Artificial Intelligence Disruption: Guide for Executives

May 18, 2026 in Guide: Explainer

Turn artificial intelligence disruption into growth. This executive guide offers actionable frameworks to transform threats into strategic opportunities.


Learn how to tell your model to pay attention to outliers

Paulo Maia on Nov 14, 2022

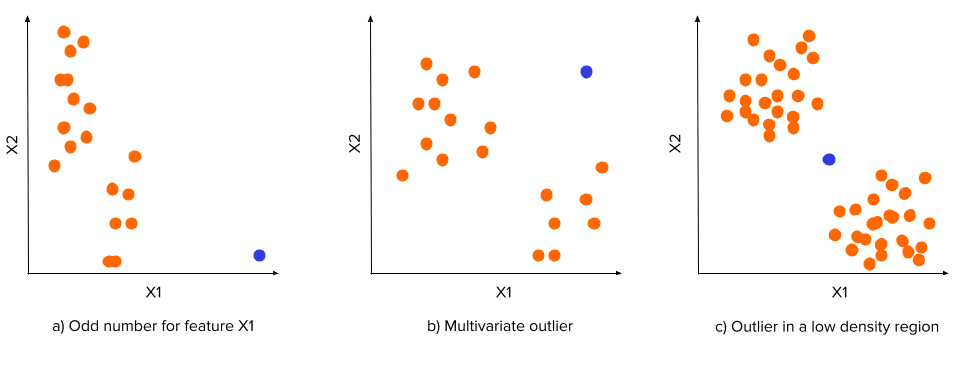

Outliers are data points that stand out for being different from the remaining data distribution. An outlier can be:

If so, be careful! Outliers do not respect the data distribution, so you should not pretend they do by removing inconvenient data points or transforming their features to become closer to the remaining data distribution. I know you need to handle them to avoid nonsensical values disturbing your pipeline. Still, you can also let the model know those data points are outliers instead of ignoring that information. The two main questions here are: Why? and How?

Why? Because you never know the cause of an outlier. It can represent either an error in the data acquisition process or a real anomaly in your population. This is highly relevant when dealing with Fraud Detection, Predictive Maintenance, or Compliance Validation situations. In these use cases, you want to detect the odd values (outliers) to prevent further risks.

How? By creating new features that represent how odd a data point is. If you make this information explicit, the model will be able to detect the outliers by itself. But if outliers can take different forms (as seen in the figure above), what features can represent their oddness? Find the answer in the next section!

You can use different distance metrics to compute how far a data point is from the centroid. We recommend the Mahalanobis distance, since it is better at handling multivariate outliers that result from unusual combinations of several variables. For example, consider these three variables: weight, height, and gender. A height of 150 cm is not that unusual for the Portuguese female population, and a weight of 90 kg is not uncommon for the Portuguese male population. However, a Portuguese woman who is 150 cm tall and weighs 90 kg would be highly unusual.
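As a rough illustration of the distance-to-centroid feature, here is a minimal NumPy sketch. The function name `mahalanobis_feature` and the synthetic height/weight data are assumptions made up for this example, not something taken from a real population or from the original pipeline.

```python
import numpy as np

def mahalanobis_feature(X):
    """Return the Mahalanobis distance of each row of X to the data centroid."""
    X = np.asarray(X, dtype=float)
    centroid = X.mean(axis=0)
    cov = np.cov(X, rowvar=False)
    # The pseudo-inverse keeps the sketch usable when the covariance is singular.
    cov_inv = np.linalg.pinv(np.atleast_2d(cov))
    diff = X - centroid
    # Quadratic form diff @ cov_inv @ diff.T, evaluated row by row.
    sq_dist = np.einsum("ij,jk,ik->i", diff, cov_inv, diff)
    return np.sqrt(np.clip(sq_dist, 0.0, None))

# Synthetic (made-up) data: height in cm and weight in kg, positively correlated.
rng = np.random.default_rng(0)
height = rng.normal(170, 10, size=500)
weight = 0.9 * (height - 170) + 70 + rng.normal(0, 5, size=500)
X = np.column_stack([height, weight])

# Append one point with an unusual combination: short but heavy.
X = np.vstack([X, [150.0, 90.0]])

# One distance per row; this column can be added to the model's features.
distances = mahalanobis_feature(X)
```

Neither 150 cm nor 90 kg is extreme on its own in this synthetic data, but the combination goes against the correlation between the two variables, which is what the Mahalanobis distance picks up.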

Previously we saw a feature that measures the distance to the centroid, but what if the data distribution has a shape like the one in the figure below?

In this case, an outlier can be a data point located in the regions where the density distribution has a depression, no matter the distance to the centroid. So a good feature to represent it would be the data density in the neighborhood of the data point.

You can use methods like KDE (Kernel Density Estimation) to estimate the density. However, this method can be too computationally expensive. So we propose a more straightforward and cheaper method: binning.
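One simple way to turn binning into a density feature is sketched below, assuming equal-width bins over all features jointly (a multidimensional histogram). The function name `binned_density_feature`, the bin count, and the two-cluster data are hypothetical choices for illustration only.

```python
import numpy as np

def binned_density_feature(X, bins=10):
    """Fraction of all points that share each point's histogram bin."""
    X = np.asarray(X, dtype=float)
    counts, edges = np.histogramdd(X, bins=bins)
    # Find each point's bin index along every dimension. Using only the
    # interior edges maps the maximum value into the last bin.
    bin_index = tuple(
        np.digitize(X[:, d], dim_edges[1:-1]) for d, dim_edges in enumerate(edges)
    )
    return counts[bin_index] / len(X)

# Synthetic (made-up) data: two dense clusters with an empty region between them.
rng = np.random.default_rng(0)
left = rng.normal(-5, 0.5, size=(300, 2))
right = rng.normal(5, 0.5, size=(300, 2))
gap_point = np.array([[0.0, 0.0]])  # close to the centroid, but in a low-density region
X = np.vstack([left, right, gap_point])

# Low values flag points that sit in sparsely populated bins.
density = binned_density_feature(X, bins=10)
```

The point at the origin is very close to the overall centroid, so a distance-based feature would not flag it, while its bin density is the lowest possible. Note that a joint histogram grows exponentially with the number of features, so this sketch only suits a handful of dimensions.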

There are two ways of using binning to estimate density distribution:

Training an autoencoder on your data lets it learn the distribution of the different variables and the relationships between them. Then, when the autoencoder receives a data point that deviates from the rest of the data, it won’t be able to reconstruct that data point correctly.

A good feature to represent outliers would be the distance between the input, X, and the reconstructed output, X’ (e.g., cosine distance). Larger distances indicate odder data points.
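To make the reconstruction-error idea concrete, the sketch below trains a deliberately tiny linear autoencoder (no activation function, one hidden unit) with plain gradient descent in NumPy. This is a simplification chosen to keep the example self-contained; in practice you would more likely use a deep-learning library with nonlinear layers. The function names, hyperparameters, and data are assumptions, and the Euclidean distance is used here in place of the cosine distance mentioned above.

```python
import numpy as np

def train_linear_autoencoder(X, hidden=1, epochs=500, lr=0.05, seed=0):
    """Fit encoder/decoder weights by minimizing the mean squared reconstruction error."""
    rng = np.random.default_rng(seed)
    n, d = X.shape
    W_enc = rng.normal(0.0, 0.1, size=(d, hidden))
    W_dec = rng.normal(0.0, 0.1, size=(hidden, d))
    for _ in range(epochs):
        code = X @ W_enc                  # compressed representation
        X_hat = code @ W_dec              # reconstruction
        grad_out = (X_hat - X) / n        # gradient of the loss w.r.t. X_hat
        grad_dec = code.T @ grad_out
        grad_enc = X.T @ (grad_out @ W_dec.T)
        W_enc -= lr * grad_enc
        W_dec -= lr * grad_dec
    return W_enc, W_dec

def reconstruction_error(X, W_enc, W_dec):
    """Euclidean distance between each input row and its reconstruction."""
    X_hat = X @ W_enc @ W_dec
    return np.linalg.norm(X - X_hat, axis=1)

# Synthetic (made-up) training data: three variables driven by one hidden factor.
rng = np.random.default_rng(1)
t = rng.normal(0, 1, size=(500, 1))
X_train = t * np.array([[1.0, 1.0, -1.0]]) + rng.normal(0, 0.1, size=(500, 3))
mean = X_train.mean(axis=0)

W_enc, W_dec = train_linear_autoencoder(X_train - mean)

# Score the training points plus one point that breaks the learned relationship.
odd_point = np.array([[2.0, -2.0, 2.0]])
X_all = np.vstack([X_train, odd_point])
errors = reconstruction_error(X_all - mean, W_enc, W_dec)
```

Each value in `errors` can be added as a feature. The last point has per-variable values that are within the normal range, yet it gets by far the largest error because the combination does not match what the autoencoder learned.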


Now that you know how to detect outliers, you have a new trick to detect possible frauds, anomalies, or errors without needing to collect data for all those exceptions. Here, we presented you with three different ways to do so.

For more ideas on how to get the most out of your data, subscribe to our newsletter below and stay tuned.



