

How to create value from mediocre models

Paulo Maia on Apr 10, 2023

What do you do when a model is underperforming? When a model's performance does not meet our expectations, we usually spend time searching for flaws: we select the cases where it failed and analyze them to understand why. Then we try more robust solutions, train, test, and repeat. In some cases we succeed, but in others the model's performance does not improve, no matter how hard we try.

What to do? The temptation to give up increases with the number of failed attempts. Since trying to fix the model’s defects didn’t lead to success, what about doing the opposite? Try to focus on the cases where your model succeeds. Select those cases, analyze them, and measure the value they contain. Do not toss your model into the garbage bin because it is missing some cases. Instead, take advantage when it gets them right!

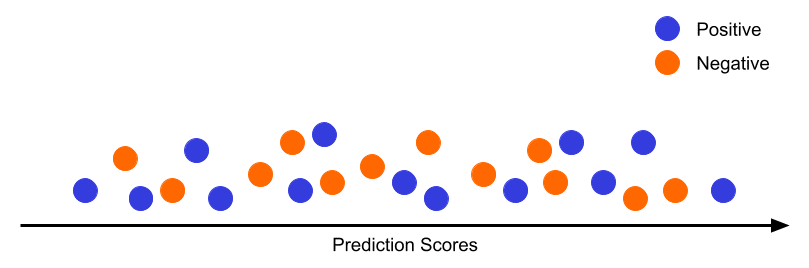

First of all, analyze your predictions! When the model is underperforming, the predictions might be distributed in two ways:

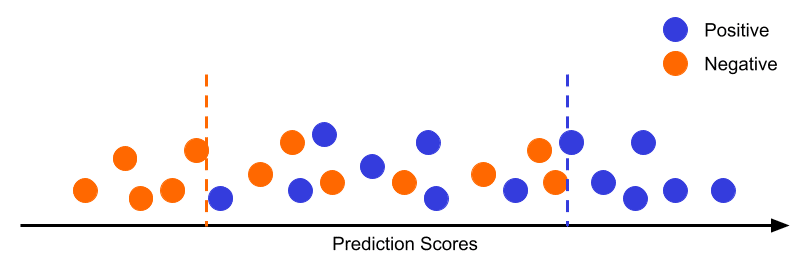

The tails analysis should focus on two main factors:

If Hero and Sidekick KPIs are new concepts to you, check our Data Ignite Course.


The viability of this approach depends on how you are going to integrate AI into your business. In a filtering integration, where the AI is used to reduce the workload that is passed to a human, any inclusion rate higher than 0% can be profitable. In a replacing integration, where the aim is to replace an existing process with an AI system, a higher inclusion rate might be required before the solution becomes profitable. But when can we use it in practice?
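To make the idea of an inclusion rate concrete, here is a minimal Python sketch for a binary classifier. It rests on assumptions that are not stated in the article: the inclusion rate is taken to be the share of cases whose score falls in either confident tail, and the function name `tail_report`, the thresholds, and the toy data are all hypothetical.

```python
def tail_report(y_true, y_prob, low, high):
    """Summarise the confident tails of a binary classifier.

    Assumption: the inclusion rate is the share of cases whose score
    falls in either tail (score <= low or score >= high).
    """
    # Cases the model is confident are negative / positive.
    low_tail = [t for t, p in zip(y_true, y_prob) if p <= low]
    high_tail = [t for t, p in zip(y_true, y_prob) if p >= high]

    total = len(y_true)
    included = len(low_tail) + len(high_tail)
    # A low-tail case is correct if it is truly negative, a high-tail
    # case if it is truly positive.
    correct = low_tail.count(0) + high_tail.count(1)

    return {
        "inclusion_rate": included / total if total else 0.0,
        "tail_accuracy": correct / included if included else None,
    }


# Hypothetical toy data: true labels and predicted probabilities.
y_true = [0, 0, 0, 1, 0, 1, 1, 1, 0, 1]
y_prob = [0.02, 0.05, 0.40, 0.45, 0.55, 0.60, 0.93, 0.97, 0.08, 0.35]

report = tail_report(y_true, y_prob, low=0.10, high=0.90)
print(report)
```

On this toy data the model is mediocre overall, yet half of the cases land in the tails and all of them are classified correctly. Moving `low` and `high` trades inclusion rate against tail accuracy, which is the trade-off a filtering or replacing integration has to price.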

In healthcare, most diagnosis-support use cases are of the filtering type. Building an autonomous AI system for disease diagnosis can be very challenging or even unrealistic, since in many cases specialists' opinions are not unanimous. With filtering, the AI screens only a small segment of patients, but with high confidence in its decision, while the remaining patients are forwarded to a doctor.
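A filtering integration of this kind can be sketched as a simple routing rule. This is an illustration only: the function `route_case`, the route labels, and the 0.05 / 0.95 thresholds are hypothetical, and in a real clinical setting the thresholds would have to be chosen and validated with specialists.

```python
from typing import Literal

Route = Literal["auto_negative", "auto_positive", "human_review"]


def route_case(score: float, low: float = 0.05, high: float = 0.95) -> Route:
    """Route one case based on the model's predicted probability.

    Only the confident tails are handled automatically; everything in
    between is forwarded to a human (for example, a doctor).
    """
    if score <= low:
        return "auto_negative"
    if score >= high:
        return "auto_positive"
    return "human_review"


# Hypothetical scores for four patients.
scores = [0.01, 0.50, 0.97, 0.30]
routes = [route_case(s) for s in scores]
print(routes)
```

The design choice here is that the default path is the human one: a case is only handled automatically when the score clears a threshold, so a poorly calibrated middle range never reaches an automated decision.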

Hot and cold leads are a type of use case in which the hottest and coldest leads are identified so that you can act on those leads or on the remaining ones. For example, if you are confident that a segment of clients is going to churn no matter what, you might avoid investing in customer service for them. On the other hand, if you are confident that a lead is going to convert, you don't need to invest more resources to convince them, and you could already plan a strategy to upsell other products. Since these use cases depend on segment identification, identifying the tails is a useful and profitable strategy.
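One possible way to identify hot and cold segments is to rank leads by model score and keep a fixed fraction at each end. The sketch below assumes that a higher score means a lead is more likely to convert; the function `split_leads`, the `tail_fraction` parameter, and the lead identifiers are hypothetical.

```python
def split_leads(scores: dict[str, float], tail_fraction: float = 0.2) -> dict[str, list[str]]:
    """Split leads into cold, middle and hot segments by ranked score.

    Assumption: a higher score means a higher chance of conversion.
    """
    ranked = sorted(scores, key=scores.get)  # lead ids, coldest first
    k = int(len(ranked) * tail_fraction)     # size of each tail
    return {
        "cold": ranked[:k],
        "middle": ranked[k:len(ranked) - k],
        "hot": ranked[len(ranked) - k:],
    }


# Hypothetical conversion scores for ten leads.
leads = {
    "a": 0.91, "b": 0.12, "c": 0.55, "d": 0.03, "e": 0.78,
    "f": 0.40, "g": 0.97, "h": 0.33, "i": 0.61, "j": 0.25,
}

segments = split_leads(leads, tail_fraction=0.2)
print(segments)
```

Ranking by fraction guarantees a fixed segment size regardless of how the scores are distributed. If the business rule is about confidence rather than volume, absolute score thresholds may be a better fit.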

Recommendation systems are another type of use case that can benefit from this approach. These models usually perform well when they have a client's history, but they tend to fail on new customers with no action history (the cold-start problem). When this happens, select the customer segment for which the model performs well and start there.
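To check where a recommender is reliable, one option is to compare its hit rate across customers with and without history. The sketch below is an assumption-laden illustration: the `min_history` cut-off of 5 events, the record layout `(number_of_past_events, recommendation_was_a_hit)`, and the function name are all hypothetical.

```python
from collections import defaultdict


def hit_rate_by_segment(records, min_history=5):
    """Compute the recommendation hit rate per customer segment.

    Each record is (number_of_past_events, recommendation_was_a_hit).
    Customers with fewer than `min_history` events count as cold start.
    """
    totals = defaultdict(lambda: [0, 0])  # segment -> [hits, count]
    for n_events, hit in records:
        segment = "with_history" if n_events >= min_history else "cold_start"
        totals[segment][0] += int(hit)
        totals[segment][1] += 1
    return {seg: hits / count for seg, (hits, count) in totals.items()}


# Hypothetical evaluation records.
records = [
    (0, False), (1, False), (2, True), (8, True),
    (12, True), (20, False), (6, True), (0, False),
]

rates = hit_rate_by_segment(records)
print(rates)
```

If the gap between segments is large, as in this toy data, serving recommendations only to customers above the history cut-off is one way to start from the segment where the model performs well.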

If you think this solution is not profitable enough for your business, don't think of it as the end of the road but as the road itself. After selecting the segment where your AI is reliable, you can put the solution into production and use the cash flow it returns to invest in data acquisition, data labeling, and deeper model exploration. This way, you only need an initial investment to create a simple solution, and the rest of the investigation can pay for itself.

So, keep in mind:
